Excerpt: In an interview, Lt Gen Jack Shanahan shares his insights on the future development and employment of artificial intelligence and machine learning.

Approximate Reading Time: 17 minutes

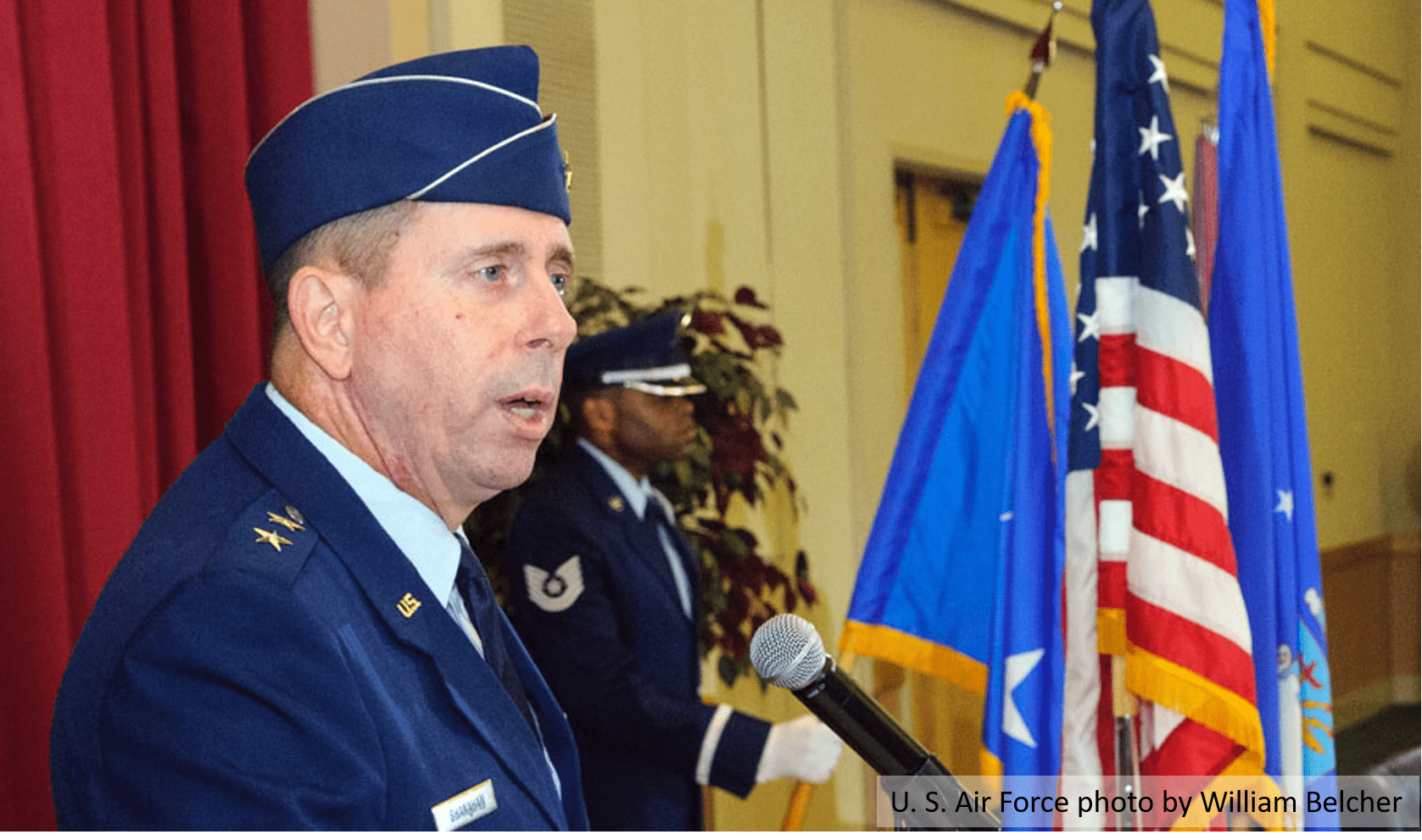

Editor’s Note: OTH had the distinct pleasure of interviewing Lt Gen Jack Shanahan, USD(I)’s Director for Defense Intelligence (Warfighter Support), in November of 2017. Through this conversation, Lt Gen Shanahan provides his insight into the DoD’s critical work on artificial intelligence (AI) and machine learning (ML). Part I of this interview is presented here and Part II will be published Wednesday. Unfortunately, technical difficulties prevented the opening exchanges from being recorded. The interview picks up with Lt Gen Shanahan describing one of his top priority initiatives, Project Maven, an effort to establish an algorithmic warfare cross-functional team.

Lt Gen Jack Shanahan (JS): It’s not an easy thing to describe to people so we use Project Maven as a substitute most of the time. Project Maven was a project that already existed, with Will Roper and the Strategic Capabilities Office working various artificial intelligence (AI) / machine learning (ML) initiatives for overhead imagery. We joined forces with Dr. Roper and proceeded under the umbrella Project Maven.

One of our initial efforts looked at the issue of having too much Full Motion Video (FMV) for analysts to process, exploit, and disseminate (PED). We were able to go out into industry to find innovative solutions. This is industry we are talking about; not the Department of Defense. This type of work is really being done in industry. I do not denigrate in any way the research labs which have incredibly smart people looking at artificial intelligence, but nowhere near the scale or the timelines we needed…which was fast.

Beginning in April, we started using artificial intelligence, machine learning, and computer vision to address one problem set: FMV from tactical unmanned aerial systems (UAS). The intent is to deliver a solution by the end of this year. So, we are barely seven months into this and will deliver the first algorithms within the next three weeks to the first deployed special operations units working in Africa. From there, we intend to deliver MQ-1/MQ-9 algorithms the following spring. Then we have a different project we will focus on which is more of a PhD-level challenge, the Gorgon Stare Wide Area Motion Imagery (WAMI) sensor. For WAMI, AI is essential because analysts cannot possibly look at the entire scene that this incredible sensor produces.

In seven months, we have been able to put on contract a data labeling company. This is one of the key lessons learned and something industry has told us over and over… “Data is everything!” It is everything. The algorithms, those are mathematics and computer science. Data, however, is the gold… which you need lots and lots of. We have come up with the process to obtain the data, pre-process the data, and then we had to label the data. Getting the DoD labeling enterprise going was another challenge all by itself. So now, we have a data labeling company that has the software for data labeling and that provided us with training. After that, we were able to get four start-up vendors on contract at the same time. Two of them are Silicon Valley vendors, with the other two being similar to Silicon Valley start-ups. These are small companies, nimble, and ready to go out. I call this the Darwinism approach, where four companies develop algorithms using the same data we give them. Interestingly enough, they come up with four different algorithms, some of which will be optimized for the full motion video and some won’t be. Those that are not, we’ll probably hold in reserve and use for many of the other problems that we have to go after.

Also, we have got a big company, one of the best-known big internet data companies, that we are still working with to go after this MQ-9 WAMI challenge. We really need their software engineering expertise and their algorithm development because it is such a challenging problem. All of that has happened in seven months. We just went through testing and validation; now we are doing testing, evaluation, and putting the algorithms on tactical UASs. Once loaded on the UAS, we are flying them in this thing called Minotaur, which is geo-registration. Analysts already have bounding boxes and confidence levels showing up on a screen, which is great, but now analysts really need this geo-registration piece, which will allow for targeting and other operational activities.

Our intent is to deliver a finished product in December. And, we are still on track. We have had a thousand different hurdles to jump over, none of which have been easy, but we are the pilot project and continue to work through it with MQ-1, MQ-9, and WAMI. After that, we have all sorts of ideas.

Although USD(I) is leading the effort, Deputy Secretary of Defense Robert O. Work stated at the time he initiated this effort that, “This is not an intelligence thing. This is a department thing, but nobody else is doing it fast and at scale, so you’re it. And, once we figure out what to do, we will figure out a transition plan for the Department of Defense. Where does this live? Does a joint program office own it?”

Over the Horizon (OTH): That is fascinating and perhaps reassuring. Does this potentially mean that we can out-evolve our adversaries or maintain a distinct capabilities advantage, which is obviously a concern throughout DoD?

JS: This is not a case where we’re going to offset somebody. We will, however, be offset if we do not do it. It is as simple as that.

In as stark terms as I can put it, the Chinese are investing billions of dollars in artificial intelligence, machine learning, and these kinds of advancements. They are very public about it. Their 13th Five-Year Plan says this explicitly, and they have a long-standing history of following through on things they say in their strategic plans.

They are going after AI in a big way. And, they have access to data because they just take it and have invested heavy venture capital into Silicon Valley. They’re going after AI and ML. If we do not do it, we will be on the outside of that OODA (observe, orient, decide and act) loop looking in.

OTH: Shifting gears a little. Algorithmic warfare and Project Maven point to a future operating environment that looks quite different from our current paradigm. These developments portend an operational environment where the joint force operates in a different way. If all of this comes to fruition, how does it change our operational construct? How does this impact how the joint force operates?

JS: We have to learn how to be a lot nimbler and more agile when it comes to AI. When it comes to algorithms, they will likely be obsolete or not as useful after six months. We are actually using the term “prototype warfare” which I think is an apt description of the mindset we are talking about. In other words, we must have rapid delivery, even though we know something is not perfect. For example, we know these first algorithms are not completely perfect. In fact, we are planning on them not being exactly right, so we are planning three “sprints.” Sprint one, two and three. Sprint one will involve a relatively limited number of object classes, a limited number of platforms, and a limited number of threshold and objective requirements. By the time we get to sprint three, those categories will all have expanded significantly.

As important as anything else to me, however, is the idea of having user engagement from the very beginning. We have analysts that are sitting down with software engineers from the very beginning. For the engineers, it’s an education on how the algorithms and tools will be employed operationally. I expect the analysts will go from saying that they had no idea what the tools were to having a much better understanding of what they can do with the tools and how it can improve their analysis.

One of the questions that we have been asking is, “where does the line go from invention to innovation?” That phrase is from Derek Thompson, who wrote a piece in The Atlantic and recently brought this point up on Google X. The inventions happen with the vendors and in the research labs, but what we really intend to do is turn this stuff over to the analyst. I cannot tell you a year from now what they are going to do with it. But, it will probably be completely different than how we anticipated the algorithms being used because they’re going to say, “well that doesn’t work well, let’s do it this way, or let’s try this.”

Unfortunately, I have a feeling that for the first couple of months, we are going to have FMV analysts staring at a screen where the algorithms are tracking and identifying objects perfectly well. It will be the worst of both worlds in that you’ll have artificial intelligence working great, doing what it is supposed to be doing, with an analyst also staring at a screen. It will be just like when the military first used heads-up displays (HUDs). People said, “Oh, I can’t use a HUD. I’m used to looking at dials.” Well, that lasted for about an hour, until they figured out how to use it. Analysts and operators will do the same thing today until they figure it out and transform what we deliver into something so much different. I look forward to that day.

To make that happen, however, you must have operator engagement from the very beginning. This world we are going into is nimble, moves faster, and we must be willing to accept less than perfect from the beginning.

I do have a lot of thoughts when you talk about how this changes the future environment. First of all, I would say that if we do not accept the fact that data is a strategic asset, we will cede time and space to our adversaries. That is a given. And, I think we can accelerate the OODA loop to drive toward a quicker Observe and Orient piece of it. Decide and Act may or may not be faster; it depends. It comes down to commanders interpreting the information they are getting from the human-machine interface. So, when you ask me what I am optimistic about in the future, it is this whole idea of human-to-machine to machine-to-machine teaming.

I am just enamored with Garry Kasparov’s book “Deep Thinking” because he is the person that lost to Deep Blue. The loss was visceral to him; he was frustrated by it. It took him years to accept the fact that a machine beat him. He said he sat there and tried to look into the machine’s eyes, but there were no eyes. Chess is a human game and you have human behavior that you try to absorb from your opponent. But, for Deep Blue, this did not exist. It took Kasparov years to really get over that experience. The book is all about the human-to-machine future. As he said, “It’s not the grand master. It’s not the machine. It’s the grand master and the machine, and superior processes.” You string those together then you will win. One by itself, you might win, but your chances are much worse than having the three in combination.

So, to me, this idea of human-machine teaming could segue into machine-to-machine teaming as we progress down this path toward autonomy. By the way, we are so far from full autonomy at this moment in history. I give no thought to the Elon Musks of the world coming up with optimized AI.

For now, my world is focused on solving a full motion video problem and then getting to document exploitation, targeting, and some other things. War and warfare are still human endeavors. It is still about humans fighting, taking territory, and all those things. But, we have arrived at a time where things are happening much faster. Humans now require machines to do what machines do best, so that humans can do what only humans do which is the reasoning part, it’s the contextual piece.

Broad AI, however, is so far away. Like I stated, I do think about the human-machine teaming. In fact, we are careful when using the word automate for analysis. The last thing we need is for decision makers to be pulling people away before we are ready. I do not believe we have ever shown that there is a people savings. Instead, what I want to do is augment the analyst. I want to get analysts time back to do difficult analysis; the hard, analytical work. Analysts need more time to think, to red team, and to consider contrarian views… rather than staring at full motion video screens. That’s the future. I see AI as augmenting analysts or operations, and amplifying analysis. AI does not replace the analysts.

OTH: OTH conducted an interview with Dr. Jon Kimminau who discussed AI and expressed some of the same sentiments. Dr. Kimminau indicated that he believes that AI will ultimately provide flexibility to allow humans to focus more intently on the creative aspect of their analytical craft.

I would like to delve a little more into human-machine teaming. During an OTH interview with Vice Admiral Jan Tighe, we discussed this topic at length. In your estimation, how do we shape the human-machine teaming construct? And, what does human-machine teaming look like now and in the future? I think we got a kind of sense of the foundations for your thoughts on it. But, how do you see human-machine teaming employed on operational teams in the field?

JS: We will not know until analysts have the ability to actually try it (i.e. delivering algorithms into the hands of operators). You can define operators as anybody from an intelligence analyst, to sensor operators, to somebody with a gun in their hand. What we will need to learn is how can an operator take advantage of what the machine is doing to make the operator perform better.

Again, because human nature has not changed since we first started walking the planet, I do expect it will be a rough start. It will be rough mostly because people will not see the full potential of what these systems are. Some will. There will be some visionaries out there who will say at the very beginning, “I can’t wait to get this in my hands, here is what I want to do with it.” But that’s not most people. Most people just view it as having a job to do, and really just want to know how they can do that job better. So, it’s going to take a while.

There are also many other factors and considerations. For the intelligence enterprise, I’ll share a few thoughts on what else needs to happen behind the scenes to allow this real vision of accepting data as a strategic asset and allowing human-machine teaming to become a reality. First, I will focus on intelligence. You have this proposition that every analyst needs all potentially relevant data from every possible source. Obviously, that is a sweeping statement. Dr. Kimminau and I have talked about this idea before. How do we go to a new balance between what I would call the classic deductive analysis or analytic approach and the inductive approach? There are different ways of coming at this; we just have to look at the differences now that I really can get access to more data.

Of course, data today is unstructured. It is in different formats. It is often quite hard to get access to. There are privacy act considerations and all sorts of things we must work through to get to the data. At the same time, as soon as you say AI, you have to also consider counter-AI. This consideration is something I always want to throw in any of these conversations about AI. How do we use data to deceive an adversary and how are our adversaries going to use data to deceive us? Whether you call it information operations, information environment, counter-AI, or something else… There will always be opportunities to do things with AI to fool algorithms, to deceive algorithms, to destroy algorithms. We have to be thinking about the counter-AI piece. For what it is worth, we are thinking about counter-AI. I won’t get into any more than that.

Second, data is no longer an information technology problem. IT systems should be framed around the operational problems they’re trying to solve, which means baking in AI. My vision of the future is that no weapons system is ever produced in the Department of Defense again without having AI built into it. We are a long way from that and have a lot of work to do before that can be realized.

Given this world of AI, however, what do I need to do differently with my data? What’s my IT architecture? We’re realizing pretty fast that you need clouds that are optimized for AI and machine learning, not clouds optimized for storing; they are different things. Cloud architectures are part of the success story for AI.

So, how do you get cloud access down to the tactical edge? There are ways to do it, but we’re just now kind of exploring that with all the cloud experts in the world. We always have to remember there is a disadvantaged user somewhere out in a foxhole who, when he or she hears the word AI, goes “I don’t know and I don’t care.” We’ve got to get the data down to support them with algorithms at the tactical edge. Beyond algorithms on the processing workstations, we have to put them out on the platforms and sensors themselves. We must start building platforms and sensors with AI built into them. It’s just like autonomous vehicles today, where there’s a master AI above all of that. In the Tesla vehicle, for example, there are all kinds of AI below the master AI, to allow it to do everything that an autonomous vehicle needs to do, to not hit things and kill people.

Third, this idea of publicly available information, open source information, is going to provide a first layer of foundational intelligence that changes the intelligence paradigm. After all, it no longer has to be classified at the highest possible level to be perceived as valuable intelligence. So take open source, publicly available information and use that as your foundational knowledge and add that with your exquisite sources. Not everybody is comfortable with that way of doing business, so that again is a shift in how people are approaching intelligence.

I already mentioned this, but I just want to reemphasize it. We need to get the analytical workforce away from this industrial-age, production-line way of processing and exploiting single collection streams and toward an information enterprise where you have some people, maybe not all because you still need depth and not just breadth, doing multi-source and all-source correlation and fusion that is integrated with joint, national, and international partners. This means sense-making by both humans and artificial intelligence systems is needed to deliver time and space for decision-making.

Again, now I’m back to the human-machine teaming part of it which is about that sense-making part. Get me through the Observe and Orient piece faster; get me to the time I need to Decide and Act. It may be quicker, it may not. But, I just want to get to Decide and Act faster, then let me decide how fast we want to move.

Then there is this whole other, what I would call more administrative or bureaucracy, part that has to happen to reflect the world of AI and machine learning. How we recruit people, how we train people, how we retain them, how we get them out to industry, how industry puts people in our spaces, how we do research and development, on and on again. What we’re really seeking, what we are really trying to do, beginning with Maven right now, is building an AI-ready culture across the Department of Defense. We don’t know exactly what that means right now, but this is one of those industries that is moving faster than DoD. The more we get DoD involved, then the more we’ll begin to put these algorithms in the hands of operators, and come up with new and creative ways of doing business.

Editor’s Note: Part II of this interview will be published Wednesday.

Lt Gen John N.T. “Jack” Shanahan currently serves as the Director for Defense Intelligence (Warfighter Support) (DDI(WS)), Office of the Under Secretary of Defense for Intelligence. Prior to this assignment he commanded the 25th Air Force at Joint Base San Antonio-Lackland, Texas, and served as the Commander of the Service Cryptologic Component. General Shanahan earned his commission in 1984 as a distinguished graduate of the University of Michigan ROTC program. He is a master navigator with more than 2,800 flying hours in the F-4D/E/G, F-15E, and RC-135. He has served in a variety of flying, staff, and command assignments.

This interview was conducted on 16 November 2017 by OTH Editor in Chief Maj Sean Atkins and Senior Editor Maj Jerry “Marvin” Gay.

The views expressed in this interview do not necessarily reflect the official policy or position of the Department of the Air Force or the U.S. Government.
